
It is strongly recommended to create an SSL certificate and key pair in order to verify the identity of the ELK server. In this example, we are going to use Filebeat to ship logs from our client servers to our ELK server.

Add the ELK server’s private IP address to the subjectAltName (SAN) field of the SSL certificate on the ELK server. To do so, open the OpenSSL configuration file (/etc/pki/tls/openssl.cnf), find the section in the file, and add this line under it (substitute the ELK server’s private IP address for the IP_ADDRESS placeholder): subjectAltName = IP: IP_ADDRESS
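The manual edit to the OpenSSL configuration can also be scripted. The following is only a rough sketch: the helper name `add_san_line`, the section header `[ example_section ]`, and the address `10.0.0.5` are all hypothetical placeholders, since the text above does not name the section to edit. It works on the configuration as a plain string and leaves reading and writing the real file to you.

```python
import ipaddress


def add_san_line(config_text: str, section: str, ip: str) -> str:
    """Return config_text with a subjectAltName line added under `section`.

    `section` is the exact header line to look for. The caller must supply
    it; this sketch does not assume which section is the right one.
    """
    ipaddress.ip_address(ip)  # raises ValueError if ip is not a valid address
    san_line = f"subjectAltName = IP: {ip}"

    out = []
    inserted = False
    for line in config_text.splitlines():
        out.append(line)
        # Insert directly below the first matching section header.
        if not inserted and line.strip() == section:
            out.append(san_line)
            inserted = True
    if not inserted:
        raise ValueError(f"section {section!r} not found")
    return "\n".join(out) + "\n"


# Hypothetical section name and IP address, for illustration only.
sample = "[ example_section ]\nbasicConstraints = CA:true\n"
print(add_san_line(sample, "[ example_section ]", "10.0.0.5"))
```

Validating the address first means a typo in the IP fails loudly instead of ending up in the certificate.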

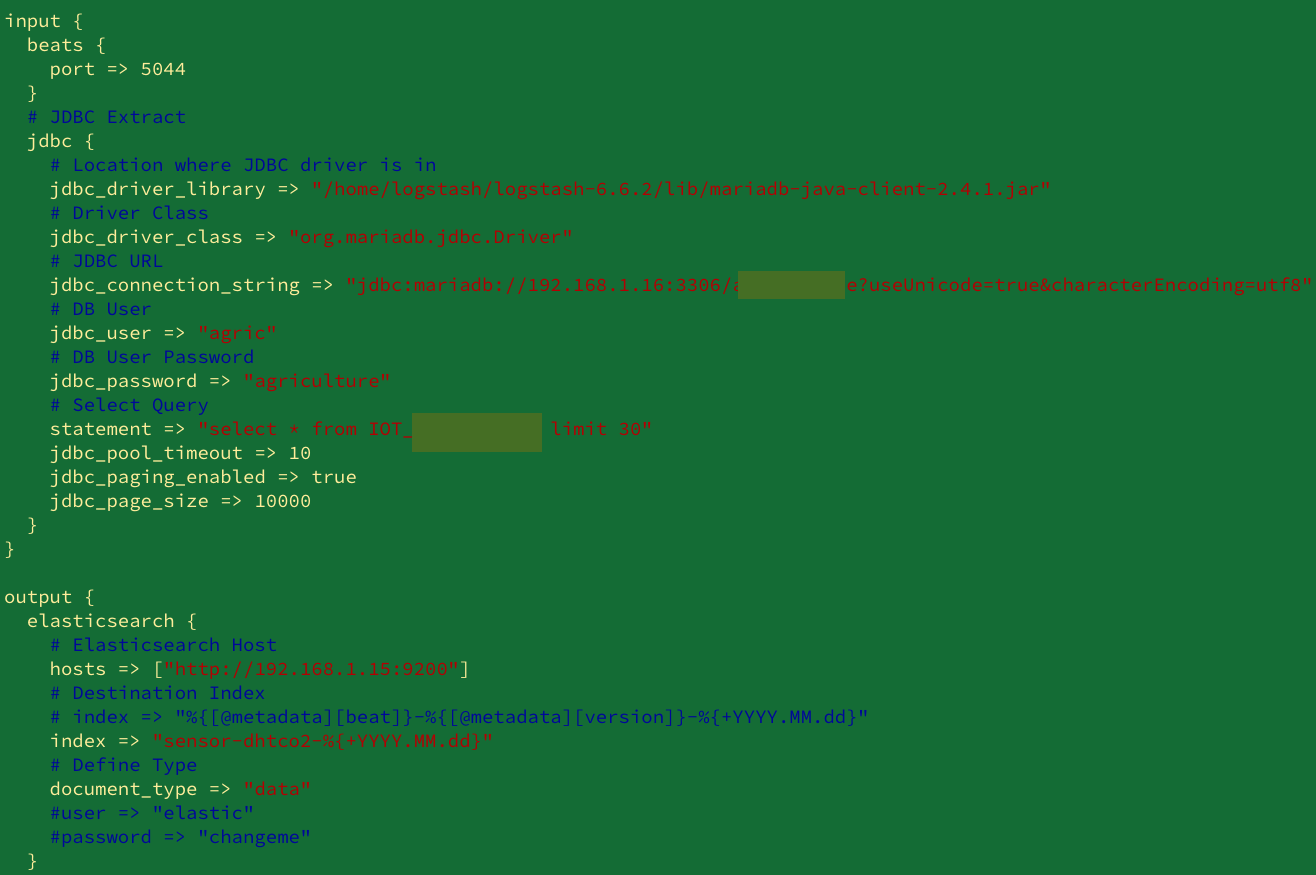

Let’s have a look at these settings.

Connect remotely to Logstash using SSL certificates

Another way to accomplish the label mapping is via the Logstash Translate plugin. Since we already have the userId field extracted, what we need next is some sort of constant key-value data structure (a map or a dictionary) or a hardcoded constant array at the Logstash level. This is where the Logstash Translate plugin comes to the rescue. The Translate filter plugin is a general search-and-replace tool that uses a configured hash and/or a file to determine replacement values. Currently supported are YAML, JSON, and CSV files.

Alright! Now what we need is a new field (in this case ‘username’), populated with the dictionary value that corresponds to the given userId (which is the key). Each dictionary item is a key-value pair. If there is a matching key in the dictionary for the given key (userId), the corresponding value is mapped to the “destination” field.

For this example, I’m using Kibana in Elasticsearch service. Now you should be able to see the expected bar chart visualization. :)

Why isn’t Filebeat collecting lines from my file?

Filebeat might be incorrectly configured or unable to send events to the output. Make sure the config file specifies the correct path to the file that you are collecting. See Step 2: Configuring Filebeat for more information. Verify that the file is not older than the value specified by ignore_older. By default, Filebeat stops reading files that are older than 24 hours. You can change this behavior by specifying a different value for ignore_older. Make sure that Filebeat is able to send events to the configured output. Run Filebeat in debug mode to determine whether it’s publishing events successfully.

Now you can test and verify Logstash plugin and GROK filter configurations. You may also tail the log of the Logstash Docker instance via: sudo docker logs -f --tail 500 logstash-test
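To make the Translate plugin's dictionary lookup concrete, here is a minimal Python sketch of the behaviour described in this post. It is not the plugin's code or its configuration syntax. The function name `translate`, its parameter names, and the sample user data are assumptions made for illustration.

```python
from typing import Any, Dict, Optional


def translate(event: Dict[str, Any], field: str, destination: str,
              dictionary: Dict[str, str],
              fallback: Optional[str] = None) -> Dict[str, Any]:
    """Return a copy of `event` with `destination` filled from `dictionary`.

    The value of event[field] is used as the lookup key. If the key is
    missing from the dictionary, `fallback` is used when given; otherwise
    the event is returned without the destination field.
    """
    result = dict(event)
    if field in event and str(event[field]) in dictionary:
        result[destination] = dictionary[str(event[field])]
    elif fallback is not None:
        result[destination] = fallback
    return result


# Made-up sample dictionary: userId (key) -> username (value).
users = {"1001": "alice", "1002": "bob"}

event = {"userId": "1001", "action": "login"}
print(translate(event, "userId", "username", users))
```

An event whose userId has no dictionary entry passes through unchanged unless a fallback is supplied, which keeps unmatched events visible downstream.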

Note: Make sure the Docker ports used are not in use by other applications.

Following is the Filebeat.yml used in this example.

Continue sending 2019-10-24T10:26:0

Let’s run Filebeat via the following command. (Later on, you can use nohup to run Filebeat as a background service, or even run Filebeat in Docker.)

Finally, let’s update the configured log file (/apps/test.log), and Filebeat will pick up the new log lines in real time.
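To see what "picking up the updated logs" means, the sketch below shows the general idea of tailing a file: remember a byte offset and read only what was appended after it. It is a simplified illustration, not how Filebeat is actually implemented. The helper `read_new_lines` is hypothetical, and a temporary file stands in for /apps/test.log so the example can run anywhere.

```python
import os
import tempfile
from typing import List, Tuple


def read_new_lines(path: str, offset: int) -> Tuple[List[str], int]:
    """Return complete lines appended after byte `offset`, plus the new offset."""
    with open(path, "rb") as f:
        f.seek(offset)
        data = f.read()
    # Only consume up to the last newline, so a half-written line is
    # left in place for the next call.
    end = data.rfind(b"\n") + 1
    lines = data[:end].decode("utf-8", errors="replace").splitlines()
    return lines, offset + end


with tempfile.TemporaryDirectory() as tmp:
    log_path = os.path.join(tmp, "test.log")  # stand-in for /apps/test.log

    with open(log_path, "w") as f:
        f.write("first line\n")
    lines, offset = read_new_lines(log_path, 0)
    print(lines)

    # Appending to the file simulates the application writing a new entry.
    with open(log_path, "a") as f:
        f.write("second line\n")
    lines, offset = read_new_lines(log_path, offset)
    print(lines)
```

Tracking the offset is what lets a shipper resume where it left off instead of re-sending the whole file.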
